- Apache Hadoop Installation on Linux: How To
- Apache Hadoop Installation on Linux: Install
- Apache Hadoop Installation on Linux: Update

Running Spark from a Jupyter notebook can require you to launch Jupyter with a specific setup so that it connects seamlessly with the Spark driver. We recommend you create a shell script, `jupyspark.sh`, designed specifically for doing that.

Create a file called `jupyspark.sh` somewhere under your `$PATH`, or in a directory of your liking (I usually use a `scripts/` directory under my home directory). In this file, you'll copy/paste the launch command, including the package lines `packages com.amazonaws:aws-java-sdk-pom:1.10.34 \` and `packages :hadoop-aws:2.8.0`.

See the previous section 2.1 for an explanation of these values. The final line here means that whatever you give as an argument to this `localsparksubmit.sh` script will be used as the last argument in this command.
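The last-argument behaviour comes from ending the command with `"$@"`, which expands to every argument passed to the script, each kept as a separate word. Below is a minimal sketch of such a `localsparksubmit.sh`, not the original listing: the `local[4]` master and the `run_submit` function name are our own assumptions, and `SPARK_HOME` must point at your Spark installation. It passes a single `--packages` value because spark-submit expects one comma-separated list of coordinates (to our knowledge, repeating the flag keeps only the last value).

```shell
#!/usr/bin/env bash
# localsparksubmit.sh: a minimal sketch, not the original script.
# Assumptions: SPARK_HOME points at your Spark installation, and a local
# 4-core master is good enough for testing.

# Launch spark-submit with fixed options, then append the caller's
# arguments ("$@") so they always come last on the command line.
# Extra package coordinates go in the same comma-separated --packages value.
run_submit() {
  "${SPARK_HOME:?SPARK_HOME is not set}/bin/spark-submit" \
    --master 'local[4]' \
    --packages com.amazonaws:aws-java-sdk-pom:1.10.34 \
    "$@"
}

# With no arguments there is no application to submit, so only print usage.
if [ "$#" -gt 0 ]; then
  run_submit "$@"
else
  echo "usage: localsparksubmit.sh <application.py> [application args...]" >&2
fi
```

For example, `localsparksubmit.sh my_job.py --input data.csv` (hypothetical file names) runs spark-submit with the fixed options first and `my_job.py --input data.csv` at the very end.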

# Apache Hadoop Installation on Linux: How To

## How to run Spark/Python from a Jupyter Notebook

To check that everything is OK, start an IPython console and type `import pyspark`. This will do nothing in practice, and that's fine: if it does not throw any error, then you are good to go.
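The same check can be run non-interactively, which is handy inside scripts. A minimal sketch, assuming the interpreter you use with IPython is called `python`; the `PYTHON` override variable and the `can_import` helper are names chosen for this example, not part of any tool.

```shell
#!/usr/bin/env bash
# check_pyspark.sh: a sketch of the "import pyspark" check from the shell.
# Assumption: $PYTHON (default: python) is the interpreter IPython uses.
PYTHON="${PYTHON:-python}"

# Exit status 0 if module "$1" imports without error, non-zero otherwise.
can_import() {
  "$PYTHON" -c "import $1" >/dev/null 2>&1
}

if can_import pyspark; then
  echo "import pyspark succeeded: you are good to go."
else
  echo "import pyspark failed: check your environment variables and that py4j is installed." >&2
fi
```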

# Apache Hadoop Installation on Linux: Install

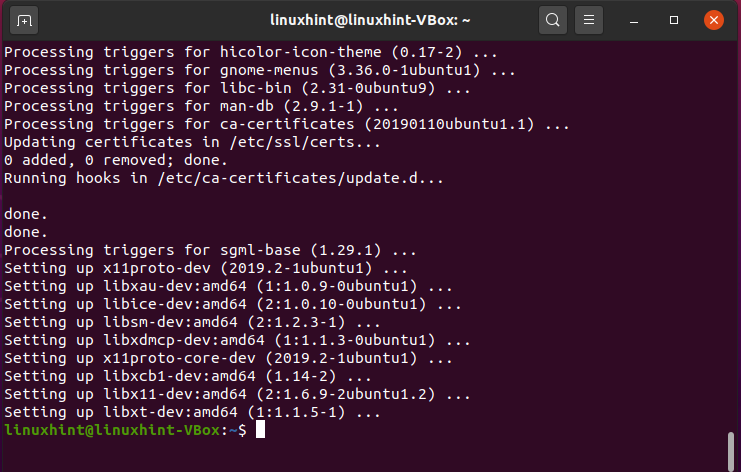

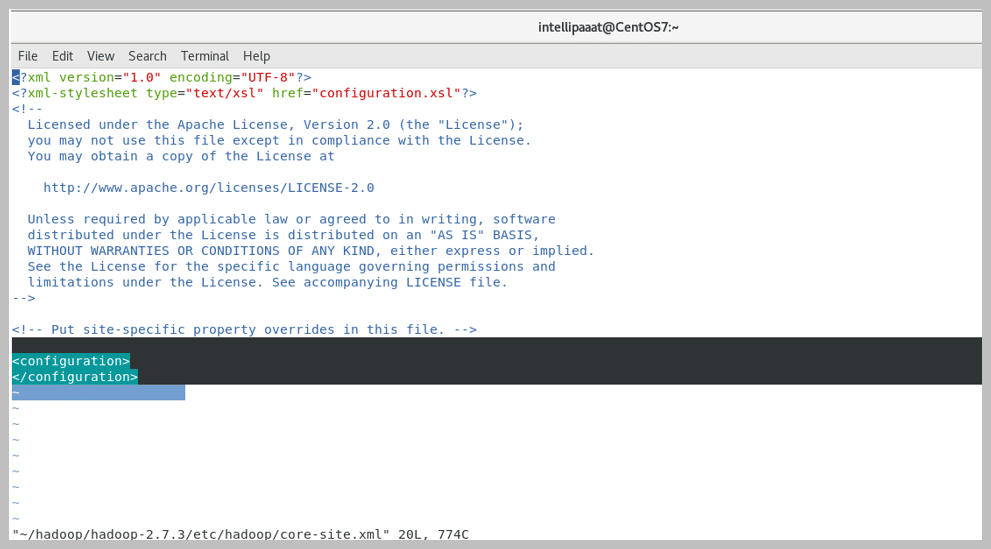

1. Use `brew install hadoop` to install Hadoop (version 2.8.0 as of July 2017).
2. Check the Hadoop installation directory by using the command:

Add your AWS credentials to `~/.bash_profile` as environment variables, with no spaces around the `=` sign:

- `export AWS_ACCESS_KEY_ID='put your access key here'`
- `export AWS_SECRET_ACCESS_KEY='put your secret access key here'`

After editing `~/.bash_profile`, you need to run `source ~/.bash_profile` from the command line for your terminal to take these changes into account. They will be taken into account automatically the next time you open a new terminal.

Back on the command line, install py4j using `pip install py4j`.
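After `source ~/.bash_profile`, you can confirm the credentials are exported to programs started from that terminal. A minimal sketch; `missing_vars` is a hypothetical helper name, and it relies on bash indirect expansion (`${!name}`), so it needs bash rather than plain `sh`. It only checks that the variables are non-empty, not that the keys are valid.

```shell
#!/usr/bin/env bash
# check_aws_env.sh: a sketch; run it after `source ~/.bash_profile`.

# Print the name of every argument whose environment variable is unset or empty.
missing_vars() {
  local name
  for name in "$@"; do
    # ${!name:-} is bash indirect expansion: the value of the variable named $name.
    [ -n "${!name:-}" ] || echo "$name"
  done
}

missing=$(missing_vars AWS_ACCESS_KEY_ID AWS_SECRET_ACCESS_KEY | tr '\n' ' ')
if [ -n "$missing" ]; then
  echo "Not set: ${missing}(did you run source ~/.bash_profile?)" >&2
else
  echo "AWS credentials are exported."
fi
```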

# Apache Hadoop Installation on Linux: Update

## Step 1: AWS Account Setup

Before installing Spark on your computer, be sure to set up an Amazon Web Services account. If you already have an AWS account, make sure that you can log into the AWS Console with your username and password.

## Step 2: Software Installation

Before you dive into these installation instructions, you need to have some software installed. Here's a table of all the software you need to install, plus the online tutorials to do so.

| Software | Description |
| --- | --- |
| brew | Very helpful for this installation and in life in general. |
| Anaconda | A distribution of Python, with packaged modules and libraries. Note: we recommend installing Anaconda 2 (for Python 2.7). |
| Java Development Kit | Used in both Hadoop and Spark. |

Use the part that corresponds to your configuration:

- Installing Spark+Hadoop on Mac with no prior installation
- Installing Spark+Hadoop on Linux with no prior installation
- Use Spark+Hadoop from a prior installation

We'll do most of these steps from the command line.

NOTE: If you would prefer to jump right into using Spark, you can use the `spark-install.sh` script provided in this repo, which will automatically perform the installation and set any necessary environment variables for you. This script will install spark-2.2.0-bin-hadoop2.7.

## Installing Spark+Hadoop on Mac with no prior installation (using brew)

Be sure you have brew updated before starting: use `brew update` to update brew and brew packages to their latest versions.
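Before running `brew update`, it can help to confirm that the prerequisites from the table are on your `PATH`. A minimal sketch; it assumes each tool answers to the command name shown (`brew`, `conda` for Anaconda, `java` for the JDK), which is our assumption rather than something the table states, and `missing_tools` is a hypothetical helper. On macOS, `/usr/bin/java` may exist as a stub even without a JDK, so treat a pass as a hint rather than proof.

```shell
#!/usr/bin/env bash
# check_prereqs.sh: a sketch that reports which prerequisite commands are missing.
# Assumed command names: brew, conda (Anaconda), java (Java Development Kit).

# Print each argument that is not found as a command on PATH.
missing_tools() {
  local tool
  for tool in "$@"; do
    command -v "$tool" >/dev/null 2>&1 || echo "$tool"
  done
}

missing=$(missing_tools brew conda java | tr '\n' ' ')
if [ -n "$missing" ]; then
  echo "Install these first: $missing" >&2
else
  echo "All prerequisites found; next, run: brew update"
fi
```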